Fourier Image Similarity: From Paper to Open-Source Package

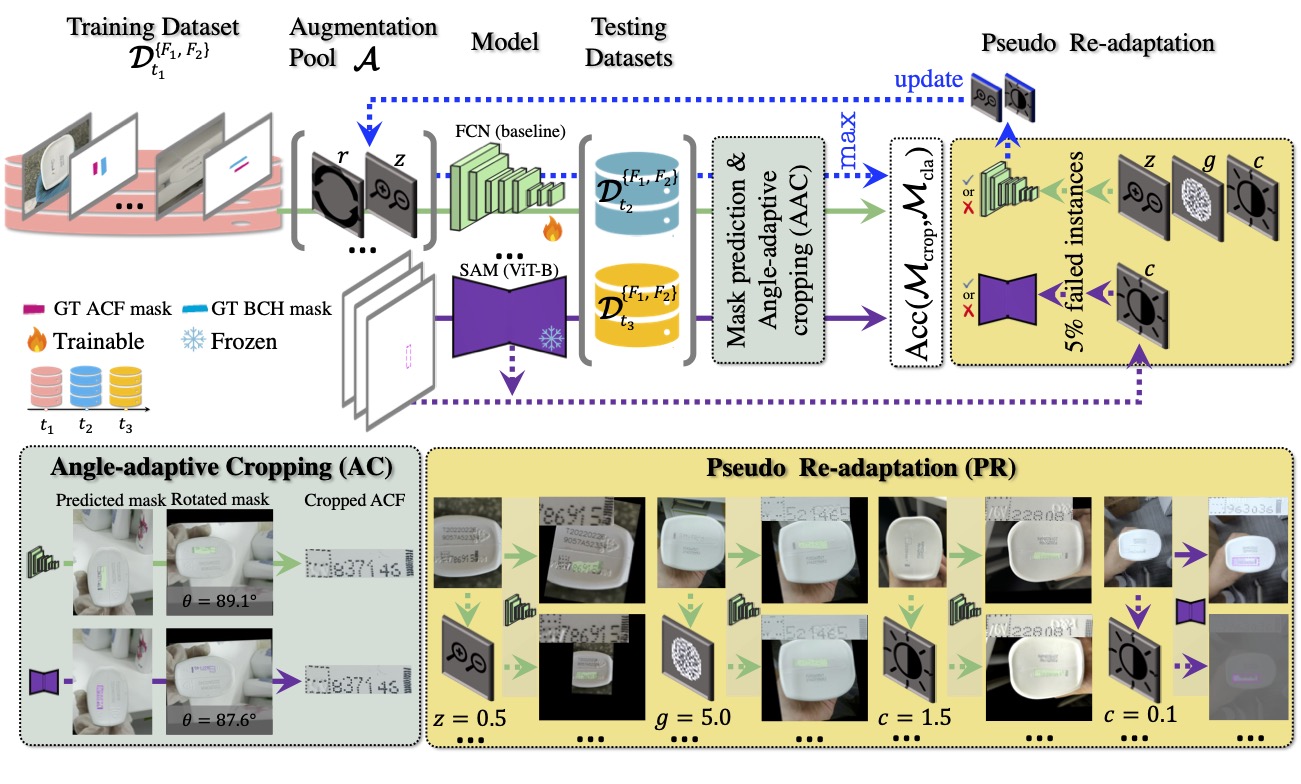

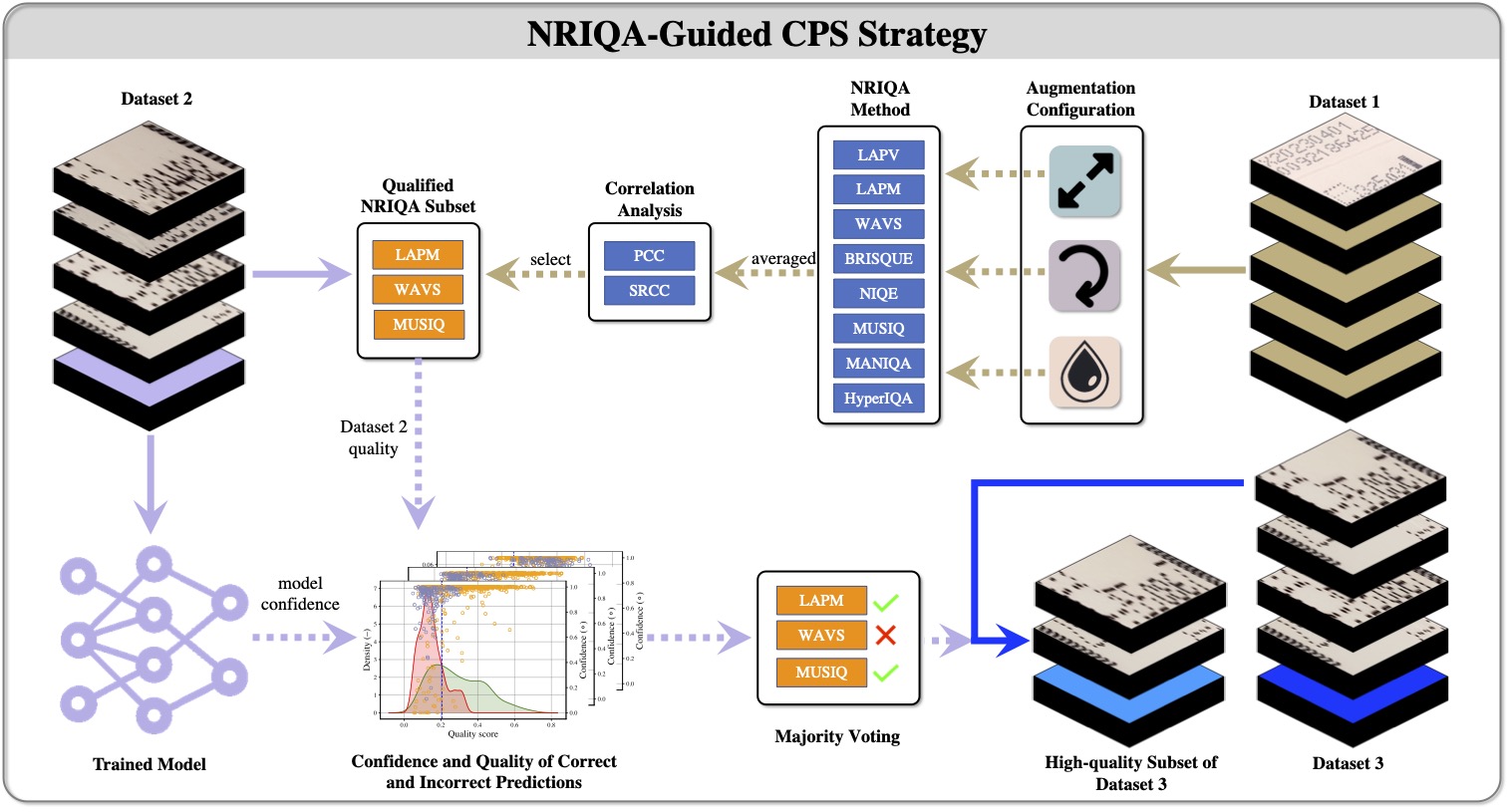

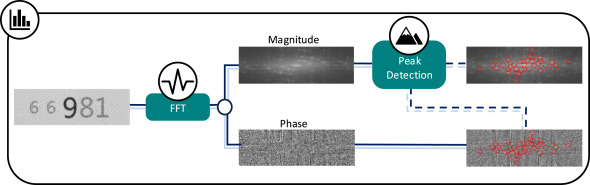

A walkthrough of the Fourier-domain image comparison pipeline extracted from my anti-copy pattern detection paper, now available as a standalone Python package.

- Why periodic structure is better compared in frequency space than in pixel space

- How peak detection, local prominence, and entropy combine into a similarity score

- Tuning the pipeline for a new image domain
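
As a quick illustration of the first point, here is a minimal sketch (not the package's code) showing that the FFT magnitude of a periodic pattern is unchanged by a circular shift, while a pixel-wise comparison of the same two images flips sign. The helper names `magnitude_spectrum` and `cosine_similarity` and the synthetic stripe pattern are assumptions made up for this example; the package's actual API and scoring are not shown here.

```python
import numpy as np


def magnitude_spectrum(img):
    """Centered 2-D FFT magnitude of a mean-subtracted image (hypothetical helper)."""
    f = np.fft.fftshift(np.fft.fft2(img - img.mean()))
    return np.abs(f)


def cosine_similarity(a, b):
    """Cosine similarity between two arrays, flattened (hypothetical helper)."""
    a, b = a.ravel(), b.ravel()
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b)))


# Synthetic periodic pattern: vertical stripes with a period of 8 pixels.
x = np.arange(64)
pattern = np.tile(np.sin(2 * np.pi * x / 8), (64, 1))

# Shift the stripes by half a period; the texture is the same, only its phase differs.
shifted = np.roll(pattern, 4, axis=1)

# Pixel space: the half-period shift makes the two images look like opposites.
pixel_sim = cosine_similarity(pattern, shifted)

# Frequency space: a circular shift changes only the phase, not the magnitude.
freq_sim = cosine_similarity(magnitude_spectrum(pattern), magnitude_spectrum(shifted))

print(f"pixel-space similarity:     {pixel_sim:+.3f}")
print(f"frequency-space similarity: {freq_sim:+.3f}")
```

The pixel-space score comes out strongly negative even though both images carry the same stripe texture, while the magnitude spectra match almost exactly. Real images are not perfectly periodic and do not shift circularly, so the invariance holds only approximately in practice.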