From Accuracy to Trust: Using Explanations to Debug Vision Models

Why Explainability Matters in Practice

For many computer vision projects, we report a single number like accuracy and move on. In real deployments, that is usually not enough. Two models with similar accuracy can behave very differently: one may rely on stable object cues, while another relies on shortcuts such as background texture, border artefacts, or lighting patterns.

If we do not inspect model attention, we risk shipping systems that look good in validation but fail under domain shift. Explainability methods help us check whether the model is learning the right reasons.

What I Focused on in My Research

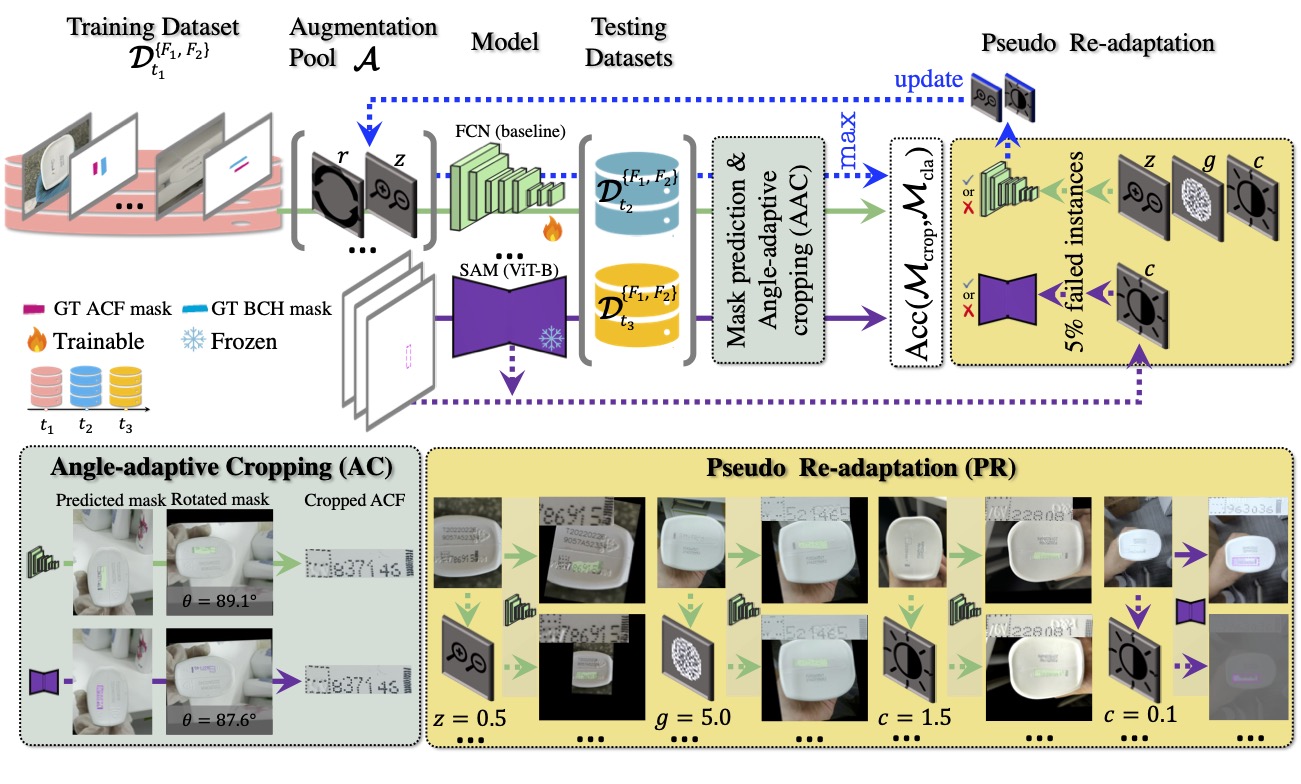

In my thesis work on robust fine-grained visual classification, I treated explainability as part of model evaluation, not just a visualization add-on. In particular, I used explanation maps to compare how training choices changed model focus and confidence behaviour across datasets.

This was especially useful when testing transfer-learning and re-adaptation pathways, where small preprocessing or data-order differences can change what the model attends to.

A Practical Explainability Workflow

- Train candidate models with different adaptation strategies.

- Select matched samples from easy, borderline, and failure cases.

- Generate explanation maps (e.g., Grad-CAM) per model/sample pair.

- Check whether attention aligns with task-relevant regions.

- Cross-check maps against confidence and per-class error trends.

This gives a stronger debugging loop than accuracy-only comparison.

What I Learned

- Attention quality predicts deployment stability: models focusing on relevant fine-grained regions were more robust under drift.

- Preprocessing choices can shift attention: seemingly minor choices (for example padding strategy) changed confidence and feature focus.

- Explainability helps pick adaptation strategy: when metrics were close, explanation maps often made the safer option obvious.

How to Use This in Your Own Pipeline

A lightweight policy that works well is: require each model candidate to pass both a metric threshold and an explainability sanity check on a fixed review set. This avoids selecting brittle models that happen to score well on one snapshot of data.

You can also archive explanation maps across model versions. Over time, this creates a useful history of how your system’s reasoning evolves as data and retraining strategies change.

Related Papers and Source

- Zuo, Z., Smith, J., Stonehouse, J., Obara, B. (2024). Robust and Explainable Fine-Grained Visual Classification with Transfer Learning: A Dual-Carriageway Framework. CVPR Workshop. arXiv.

- Selvaraju, R. R., et al. (2017). Grad-CAM: Visual Explanations from Deep Networks via Gradient-Based Localization. ICCV.

- PhD Thesis Chapter 4 (2025).